Exponential growth of datapoints used to train notable AI systems

What you should know about this indicator

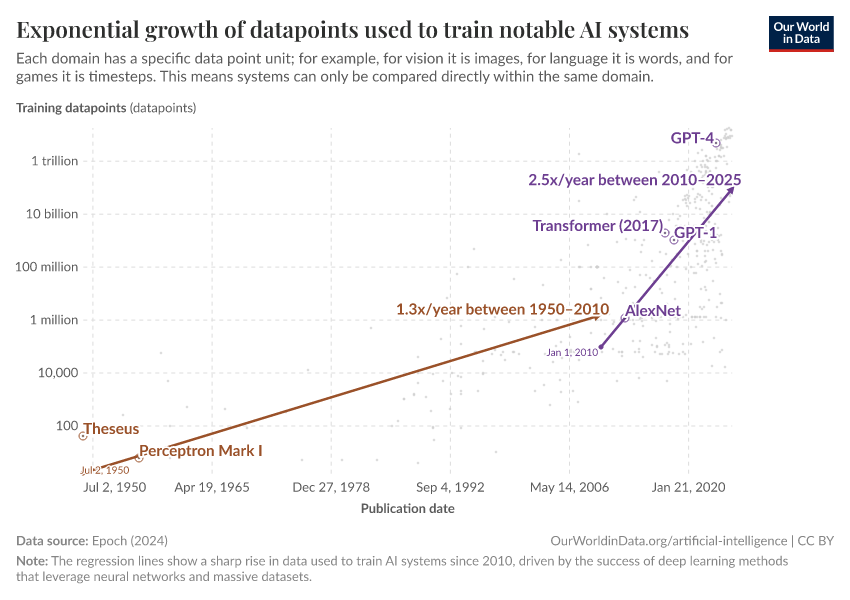

- Training data size measures the volume of unique examples used to train an AI model during its learning phase. It represents the total number of distinct data points the model learns from, counted only once regardless of how many times they're seen during training.

- To understand this concept, imagine teaching someone to identify different bird species. Each unique bird photo you show them is one piece of training data. If you show 100 different photos, your training data size is 100, even if you review those same photos multiple times.

- Since datasets vary by domain, there's no universal unit for measuring size. Text models might count tokens, image models count pictures, and video models count clips. Epoch AI typically uses the smallest unit that triggers a model update during training. For language models that predict the next word, this would be individual tokens.

- Training data size directly affects model performance. Larger datasets generally let models learn more nuanced patterns, so they can identify subtle distinctions and handle diverse real-world scenarios more effectively.
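The counting rule described in the points above can be illustrated with a minimal sketch. The function names, the file names, and the whitespace-based token count are illustrative assumptions, not Epoch AI's actual methodology; real language models use dedicated tokenizers.

```python
def training_data_size(examples):
    """Count distinct examples; repeats (for instance across epochs) count once."""
    return len(set(examples))


def examples_seen(examples, epochs):
    """Count every presentation of an example, including repeats across epochs."""
    return len(examples) * epochs


def token_count(texts):
    """Total tokens in a text dataset.

    Assumption: splitting on whitespace stands in for a real tokenizer.
    """
    return sum(len(text.split()) for text in texts)


# The bird-photo analogy: 100 distinct photos, each reviewed three times.
photos = [f"bird_{i:03d}.jpg" for i in range(100)]  # hypothetical file names

size = training_data_size(photos * 3)   # distinct photos only
seen = examples_seen(photos, epochs=3)  # every viewing counted

# For a language model, the unit would be tokens rather than documents.
corpus = ["the cat sat", "the dog ran fast"]
tokens = token_count(corpus)
```

Here `size` stays at 100 while `seen` is 300, which is the distinction the indicator relies on: reviewing the same examples again does not increase the training data size.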

Sources and processing

This data is based on the following sources

How we process data at Our World in Data

All data and visualizations on Our World in Data rely on data sourced from one or several original data providers. Preparing this original data involves several processing steps. Depending on the data, this can include standardizing country names and world region definitions, converting units, calculating derived indicators such as per capita measures, as well as adding or adapting metadata such as the name or the description given to an indicator.

At the link below you can find a detailed description of the structure of our data pipeline, including links to all the code used to prepare data across Our World in Data.

Reuse this work

Citations

How to cite this page

To cite this page overall, including any descriptions, FAQs or explanations of the data authored by Our World in Data, please use the following citation:

“Data Page: Exponential growth of datapoints used to train notable AI systems”, part of the following publication: Charlie Giattino, Edouard Mathieu, Veronika Samborska, and Max Roser (2023) - “Artificial Intelligence”. Data adapted from Epoch AI. Retrieved from https://archive.ourworldindata.org/20260518-082431/grapher/exponential-growth-of-datapoints-used-to-train-notable-ai-systems.html [online resource] (archived on May 18, 2026).

How to cite this data

In-line citation

If you have limited space (e.g. in data visualizations), you can use this abbreviated in-line citation:

Epoch AI (2026) – with major processing by Our World in Data

Full citation

Epoch AI (2026) – with major processing by Our World in Data. “Exponential growth of datapoints used to train notable AI systems” [dataset]. Epoch AI, “Parameter, Compute and Data Trends in Machine Learning” [original data]. Retrieved June 2, 2026 from https://archive.ourworldindata.org/20260518-082431/grapher/exponential-growth-of-datapoints-used-to-train-notable-ai-systems.html (archived on May 18, 2026).

Download

Quick download

Download the data shown in this chart as a ZIP file containing a CSV file, metadata in JSON format, and a README. The CSV file can be opened in Excel, Google Sheets, and other data analysis tools.
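For readers who prefer to script this step, here is a minimal sketch of reading the CSV and JSON metadata out of such a ZIP file using only the Python standard library. The function name `read_owid_zip` is hypothetical, and because the file names inside the archive are not stated here, the sketch assumes only that the archive holds one `.csv` file and one `.json` file and matches them by extension.

```python
import csv
import io
import json
import zipfile


def read_owid_zip(source):
    """Return (rows, metadata) from a downloaded chart ZIP.

    `source` is a file path or a binary file-like object.
    Assumption: the archive contains one .csv and one .json member;
    their exact names are not known, so they are matched by extension.
    """
    with zipfile.ZipFile(source) as archive:
        names = archive.namelist()
        csv_name = next(n for n in names if n.lower().endswith(".csv"))
        json_name = next(n for n in names if n.lower().endswith(".json"))

        # Decode the CSV member as UTF-8 text and parse it into dict rows.
        with archive.open(csv_name) as raw:
            text = io.TextIOWrapper(raw, encoding="utf-8", newline="")
            rows = list(csv.DictReader(text))

        # The metadata member is plain JSON.
        with archive.open(json_name) as raw:
            metadata = json.load(raw)

    return rows, metadata
```

Calling `read_owid_zip("path/to/download.zip")` (a placeholder path) would return the data as a list of dictionaries keyed by column name, with all values as strings, alongside the parsed metadata.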

Data API

Use these URLs to programmatically access this chart's data and configure your requests with the options below. Our documentation provides more information on how to use the API, and you can find a few code examples below.
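As a rough illustration of how the request options fit together, the sketch below assembles the CSV and metadata URLs from their parts. The helper name `grapher_url` and its parameter names are hypothetical; the query keys (`v`, `csvType`, `useColumnShortNames`) and their default values are the ones that appear in the URLs on this page. Other values are not confirmed here, so check the API documentation before changing them.

```python
from urllib.parse import urlencode

BASE = "https://ourworldindata.org/grapher"
SLUG = "exponential-growth-of-datapoints-used-to-train-notable-ai-systems"


def grapher_url(slug, extension, csv_type="full", short_names=False, version=1):
    """Build a chart data URL (hypothetical helper).

    Defaults mirror the query string shown on this page:
    v=1, csvType=full, useColumnShortNames=false.
    """
    query = urlencode(
        {
            "v": version,
            "csvType": csv_type,
            # The page's URLs use lowercase "true"/"false" strings.
            "useColumnShortNames": str(short_names).lower(),
        }
    )
    return f"{BASE}/{slug}.{extension}?{query}"


csv_url = grapher_url(SLUG, "csv")
metadata_url = grapher_url(SLUG, "metadata.json")
```

With the defaults, `csv_url` and `metadata_url` reproduce the two URLs listed on this page, so the helper can be dropped into any of the language-specific snippets that take a URL string.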

Data URL (CSV format)

https://ourworldindata.org/grapher/exponential-growth-of-datapoints-used-to-train-notable-ai-systems.csv?v=1&csvType=full&useColumnShortNames=false

Metadata URL (JSON format)

https://ourworldindata.org/grapher/exponential-growth-of-datapoints-used-to-train-notable-ai-systems.metadata.json?v=1&csvType=full&useColumnShortNames=false

Excel / Google Sheets

=IMPORTDATA("https://ourworldindata.org/grapher/exponential-growth-of-datapoints-used-to-train-notable-ai-systems.csv?v=1&csvType=full&useColumnShortNames=false")

Python with Pandas

import pandas as pd

import requests

# Fetch the data.

df = pd.read_csv("https://ourworldindata.org/grapher/exponential-growth-of-datapoints-used-to-train-notable-ai-systems.csv?v=1&csvType=full&useColumnShortNames=false", storage_options = {'User-Agent': 'Our World In Data data fetch/1.0'})

# Fetch the metadata

metadata = requests.get("https://ourworldindata.org/grapher/exponential-growth-of-datapoints-used-to-train-notable-ai-systems.metadata.json?v=1&csvType=full&useColumnShortNames=false").json()

R

library(jsonlite)

# Fetch the data

df <- read.csv("https://ourworldindata.org/grapher/exponential-growth-of-datapoints-used-to-train-notable-ai-systems.csv?v=1&csvType=full&useColumnShortNames=false")

# Fetch the metadata

metadata <- fromJSON("https://ourworldindata.org/grapher/exponential-growth-of-datapoints-used-to-train-notable-ai-systems.metadata.json?v=1&csvType=full&useColumnShortNames=false")

Stata

import delimited "https://ourworldindata.org/grapher/exponential-growth-of-datapoints-used-to-train-notable-ai-systems.csv?v=1&csvType=full&useColumnShortNames=false", encoding("utf-8") clear