How is literacy measured?

The chart below shows global literacy rates among adults since 1800. This is a powerful graph: it tells us that over the last two centuries the share of illiterate adults has gone down from 88% to less than 14%.

This global perspective on education leads to a natural question: What does it actually mean that a person is ‘literate’ in these international statistics?

Literacy rates are only a proxy for what we actually care about, namely literacy skills. The distinction matters because literacy skills are complex and span a range of proficiency levels, while literacy rates assume a sharp, binary distinction between those who are and aren’t ‘literate’.

What is the definition of literacy that underlies the estimates in the chart? And how do these estimates compare to other measures of educational achievement and literacy skills?

In this post we answer these questions. We begin with an overview of recent estimates published by UNESCO, and then move on to discuss long-run estimates that rely on historical data.

Measurement today

Methodologies for measuring literacy

Let's start by taking a look at recent estimates of literacy, specifically the estimates of literacy rates compiled by UNESCO from different sources.

In the chart we present a breakdown of these estimates, showing the main methodologies that countries use to measure literacy, and how these have changed over time.

The breakdown covers four categories: self-reported literacy declared directly by individuals, self-reported literacy declared by the head of the household, tested literacy from proficiency examinations, and indirect estimation or extrapolation.

In most cases, the categories covering 'self-reports' (green and orange) correspond to estimates of literacy that rely on answers provided to a simple yes/no question asking people if they can read and write.

The category 'indirect estimation' (red) corresponds mainly to estimates that rely on indirect evidence from educational attainment, usually based on the highest degree of completed education.

This chart is telling us that:

- There is substantial cross-country variation, with recent estimates covering all four measurement methods.

- There is variation within countries across time (e.g. Mexico switches between self-reports and extrapolation).

- The number of countries that base their estimates on self-reports and testing is increasing.

Data sources for measuring literacy

Another way to dissect the same data is to classify literacy estimates according to the type of measurement instrument used to collect the relevant data. Which countries use household sampling instruments such as UNICEF's Multiple Indicator Cluster Surveys? Which countries use census data? And which countries do not collect literacy data directly, but rely instead on other sources?

In the chart we explore this, splitting estimates into three categories: sampling, including data from literacy tests and household surveys; census data; and other instruments (e.g. administrative data on school enrollment).

Here we can see that most countries use sampling instruments (coded as 'surveys' in the map), although in the past census data was more common. Literacy surveys have the potential of being more accurate – when the sampling is done correctly – because they allow for more specific and detailed measurement than short and generic questions in population censuses. Below we discuss this further.

Data quality: Challenges and limitations

As mentioned above, recent data on literacy is often based on a single question included in national population censuses or household surveys presented to respondents above a certain age, where literacy skills are self-reported. The question is often phrased as "can you read and write?". These self-reports of literacy skills have several limitations:

- Simple questions such as "can you read and write?" frame literacy as a skill you either possess or do not when, in reality, literacy is a multi-dimensional skill that exists on a continuum.

- Self-reports are subjective, in that the answer depends on what each individual understands by "reading" and "writing". The form of a word may be familiar enough for a respondent to recall its sound or meaning without actually ‘reading’ it. Similarly, a respondent asked to write out their name to demonstrate writing ability may simply ‘draw’ a familiar shape rather than produce written text with meaning.

- In many cases surveys ask only one individual to report literacy on behalf of the entire household. This indirect reporting potentially introduces further noise, in particular when it comes to estimating literacy among women and children, since these groups are less often considered 'head of household' in the surveys.

Similarly, inferring literacy from data on educational attainment is problematic, since schooling does not produce literacy in the same way everywhere. Proficiency tests show that in many low-income countries a large fraction of second-grade primary-school students cannot read a single word of a short text, and four or five years of schooling guarantee basic literacy for very few people in these countries.

Even at a conceptual level there is a lack of consensus: national definitions of literacy that are based on educational attainment vary substantially from country to country. For example, in Greece people are considered literate if they have finished six years of primary education, while in Paraguay you qualify as literate if you have completed two years of primary school.[1]
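To make the definitional gap concrete, the sketch below applies the two attainment thresholds mentioned above (six years for Greece, two for Paraguay) to the same group of people. The years-of-schooling values and the function name are illustrative assumptions, not data from any survey.

```python
# Attainment-based literacy thresholds quoted in the text (years of primary school).
THRESHOLD_YEARS = {"Greece": 6, "Paraguay": 2}


def literacy_rate(years_of_schooling, threshold):
    """Share of people counted as literate under an attainment threshold."""
    literate = sum(1 for years in years_of_schooling if years >= threshold)
    return literate / len(years_of_schooling)


# Hypothetical sample: completed years of primary schooling for ten adults.
sample = [0, 1, 2, 3, 4, 4, 5, 6, 6, 8]

rates = {country: literacy_rate(sample, t) for country, t in THRESHOLD_YEARS.items()}
for country, rate in rates.items():
    print(f"{country} definition: {rate:.0%} literate")
```

The same ten people produce very different literacy rates depending only on which national definition is applied, which is why attainment-based estimates are hard to compare across countries.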

New perspectives through standardized literacy tests

Given the limitations of self-reported or indirectly inferred literacy estimates, efforts are being made at both national and international levels to conduct standardized literacy tests to assess proficiency in a systematic way.

In particular, large cross-country assessment surveys have been developed to overcome the challenges of producing comparable literacy data. Two important examples are the Programme for the International Assessment of Adult Competencies (PIAAC), which is a test used for measuring literacy mostly in rich countries; and the Literacy Assessment and Monitoring Programme (LAMP), which is a household assessment aimed at measuring literacy skills in developing countries, while remaining comparable across countries, languages, and scripts.[2]

The LAMP tests have only recently been field tested in four countries: Jordan, Mongolia, Palestine, and Paraguay. The PIAAC tests, on the other hand, have been administered in about 30 countries, and the results are shown in the chart.[3]

We only have these test results for a few countries, but we can still see that there is an overall positive correlation between PIAAC scores and reported literacy rates. Moreover, there is substantial variation in scores even among countries with almost identical, near-perfect literacy rates (e.g. Japan vs Italy). This suggests that PIAAC tests capture a related but broader concept of literacy.
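The point that identical literacy rates can hide different skill levels can be illustrated with a small sketch. The scores, the cutoff, and the country labels below are all hypothetical; they are not PIAAC results.

```python
from statistics import mean


def binary_rate(scores, cutoff):
    """Share of people at or above the cutoff, i.e. counted as 'literate'."""
    return sum(score >= cutoff for score in scores) / len(scores)


# Hypothetical proficiency scores on an arbitrary scale.
country_a = [210, 250, 280, 300, 320]
country_b = [205, 215, 225, 235, 245]
CUTOFF = 200  # hypothetical threshold for being counted as literate

rate_a, rate_b = binary_rate(country_a, CUTOFF), binary_rate(country_b, CUTOFF)
mean_a, mean_b = mean(country_a), mean(country_b)

print(f"Literacy rate: A={rate_a:.0%}, B={rate_b:.0%}")
print(f"Mean score:    A={mean_a}, B={mean_b}")
```

Both hypothetical countries report the same binary literacy rate, yet their average scores differ, which is the kind of variation a proficiency test can reveal and a yes/no measure cannot.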

Reconstructing estimates from the past

Census data

Literacy in the sense of the UNESCO definition, "people who can, with understanding, read and write a short, simple statement on their everyday life", started being recorded in census data from the end of the 19th century onwards. Hence, despite variation in the types of questions and census instruments used, these historical census data remain the best source of data on literacy for the period prior to 1990.

The longest series attempting to reconstruct literacy estimates from historical census data is provided in the OECD report "How Was Life? Global Well-being since 1820", published in 2014. This is the source used in the first chart in this blog post for the period 1800 to 1990.[4]

How accurate are these UNESCO estimates?

The evidence suggests that the narrow concept of literacy measured in early census data provides an imperfect, yet informative account of literacy skills. The chart is a good example: As we can see, already in 1947, census estimates from the US correlated strongly with educational attainment, as one would expect.

The relationship between educational attainment and literacy over time

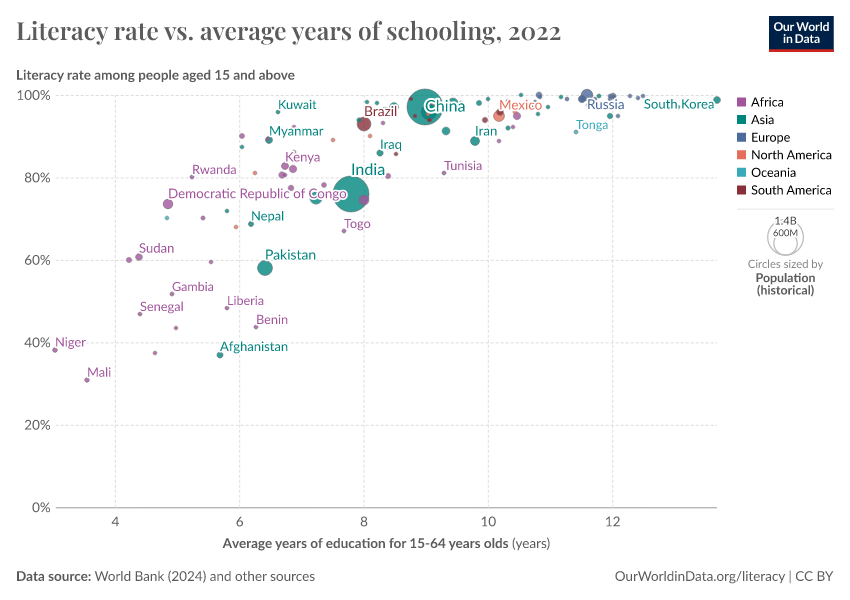

Importantly, the correlation between educational attainment and literacy also holds across countries and over time. The chart shows this by plotting changes in literacy rates and average years of schooling. Each country in this chart is represented by a line, where the beginning and ending points correspond to the first and last available observation of these two variables over the period 1970-2010. (As we mention above, before 1990 almost all observations correspond to census data.)

As we can see, literacy rates tend to be much higher in countries where people tend to have more years of education; and as average years of education go up in a country, literacy rates also go up.

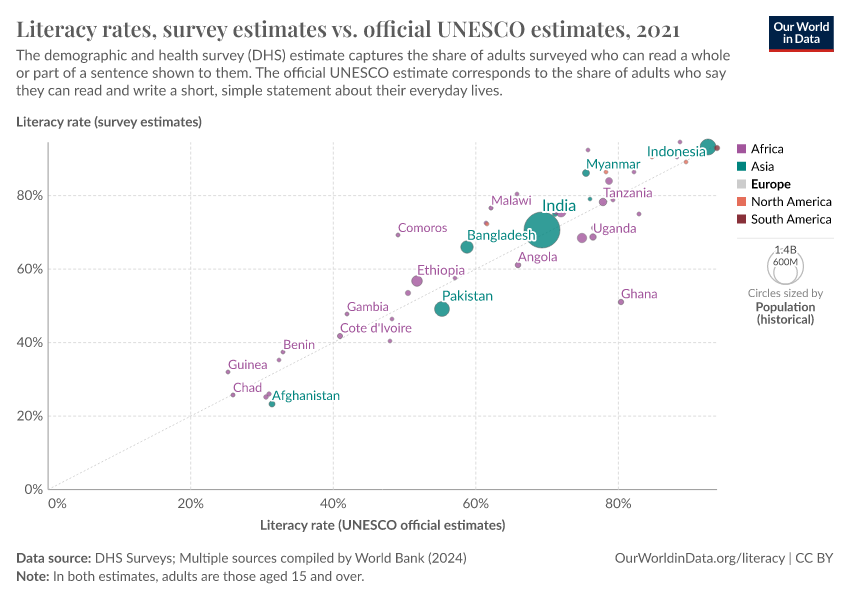

Official estimates vs one-sentence test results

Countries with high literacy rates also tend to have higher results in the basic literacy tests included in the DHS surveys (a test that requires survey respondents to read a sentence shown to them). As we can see in the chart, there is clearly some noise, but these two variables are closely related.

Other historical sources

When census data is not available, a common method used to estimate literacy is to calculate the share of people who could sign official documents (e.g. court documents, marriage certificates, etc.).[5] As the researcher Jeremiah Dittmar explains, this approach only gives a lower bound on literacy, because the number of people who could read was higher than the number who could write.

Other methods have therefore been proposed that rely instead on historical evidence of how many people could read. For example, researchers Eltjo Buringh and Jan Luiten van Zanden deduce literacy rates from estimated per capita book consumption.[6] As Buringh and Van Zanden show, their estimates based on book consumption differ from, but are still fairly close to, alternative estimates based on signed documents.

Concluding remarks

Literacy is a key skill and a key measure of a population's education. However, measuring literacy is difficult because literacy is a complex multidimensional skill.

For decades, most countries have been measuring literacy through yes/no, self-reported answers to a single question in a survey or census along the lines of "can you read and write?".

These estimates provide a basic perspective on literacy skills. They tell us something meaningful about broad changes in education in the long run, since the changes across decades are much larger than the underlying error margins at any point in time. But they remain insufficient to fully characterize literacy skills and understand challenges ahead.

As populations become more educated, we need more accurate instruments to measure abilities – asking people whether they can read and write is insufficient to meaningfully detect differences in the way people apply skills for work and life.

Efforts have been made in recent years, both at national and international levels, to develop standardized, tailor-made survey instruments that measure literacy skills more accurately through tests. Several countries already implement these tests, but we will need to wait several years for these new instruments to become the norm for measuring and reporting literacy rates internationally.

Endnotes

1. You can find more details about this in Chapter 6 of the Education for All Global Monitoring Report (2006).

2. The LAMP tests three skill domains: reading continuous texts (prose), reading non-continuous texts (documents), and numeracy skills. Each skill domain is divided into three performance levels: the top 30% of respondents are at Level 3, the middle 40% at Level 2, and the bottom 30% at Level 1.
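The percentile split described in this note can be sketched as follows. The function name, the tie-breaking rule (ties keep their input order), and the scores are assumptions made for illustration; LAMP's actual scoring procedure is not described here.

```python
def assign_levels(scores):
    """Assign Level 1 (bottom 30%), Level 2 (middle 40%) or Level 3 (top 30%)
    by rank within the group. Returns the levels in the input order."""
    n = len(scores)
    # Indices of respondents sorted from lowest to highest score.
    order = sorted(range(n), key=lambda i: scores[i])
    levels = [0] * n
    for rank, idx in enumerate(order):
        # Integer arithmetic avoids floating-point edge cases at the boundaries.
        if 10 * rank < 3 * n:
            levels[idx] = 1
        elif 10 * rank < 7 * n:
            levels[idx] = 2
        else:
            levels[idx] = 3
    return levels


# Hypothetical scores for ten respondents in one skill domain.
scores = [55, 72, 38, 90, 61, 47, 83, 66, 29, 78]
levels = assign_levels(scores)
print(levels)
```

Because the levels are defined by rank within the tested group, a respondent's level says where they stand relative to other respondents rather than what absolute skill they have.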

The LAMP tests have only recently been field tested. The LAMP structure in these pilots (implemented in Jordan, Mongolia, Palestine, and Paraguay) is as follows:

- Background questionnaire: collects information on the respondent’s educational attainment, self-reported literacy, literacy use in and outside of work, and occupation, amongst other information.

- Filter test: Booklet with 17 items to determine if respondent takes Module A (for low performers) or Module B (for high performers)

- Module A: Testing prose, document, and numeracy items.

- Module B: Respondent is randomly assigned Booklet 1 or Booklet 2, both testing prose, document, and numeracy skills for high performers of the filter test.
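The flow of the pilot instrument described above can be sketched as a small routing function. The cutoff of 9 correct filter items is a hypothetical value (the text only says the booklet has 17 items), and the function and constant names are illustrative.

```python
import random

FILTER_ITEMS = 17        # number of items in the filter booklet (from the text)
HYPOTHETICAL_CUTOFF = 9  # assumed pass mark; the real cutoff is not given here


def route_respondent(filter_correct, rng=random):
    """Return the module (and booklet, if any) a respondent would take."""
    if not 0 <= filter_correct <= FILTER_ITEMS:
        raise ValueError("filter score must be between 0 and 17")
    if filter_correct < HYPOTHETICAL_CUTOFF:
        return "Module A"
    # High performers are randomly assigned one of two booklets.
    booklet = rng.choice([1, 2])
    return f"Module B, Booklet {booklet}"


print(route_respondent(4))
print(route_respondent(15, rng=random.Random(0)))
```

Passing a seeded `random.Random` instance makes the booklet assignment reproducible, which is convenient when checking the routing logic.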

3. The World Bank's STEP Skills Measurement Program also provides a direct assessment of reading proficiency and related competencies scored on the same scale as the OECD's PIAAC. This adds another 11 countries to the coverage of PIAAC tests. You can read more about it here: http://microdata.worldbank.org/index.php/catalog/step/about

4. The OECD report relies on a number of underlying sources. For the period before 1950, the underlying source is the UNESCO report on the Progress of Literacy in Various Countries Since 1900 (about 30 countries). For the mid-20th century, the underlying source is the UNESCO report on Illiteracy at Mid-Century (about 36 additional countries). And up to 1970, the source is UNESCO statistical yearbooks.

5. Since estimates of signed documents tend to rely on small samples (e.g. parish documents from specific towns), researchers often rely on additional assumptions to extrapolate estimates to the national level. For example, Bob Allen provides estimates of the evolution of literacy in Europe between 1500 and 1800 using data on urbanization rates. For more details see Allen, R. C. (2003). Progress and poverty in early modern Europe. The Economic History Review, 56(3), 403-443.

6. Buringh and Van Zanden use a demand equation that links book consumption to a number of factors, including literacy and book prices. For more details see Buringh, E. and Van Zanden, J.L., 2009. Charting the “Rise of the West”: Manuscripts and Printed Books in Europe, a long-term Perspective from the Sixth through Eighteenth Centuries. The Journal of Economic History, 69(2), pp.409-445.

Cite this work

Our articles and data visualizations rely on work from many different people and organizations. When citing this article, please also cite the underlying data sources. This article can be cited as:

Esteban Ortiz-Ospina and Diana Beltekian (2018) - “How is literacy measured?” Published online at OurWorldinData.org. Retrieved from: 'https://archive.ourworldindata.org/20260518-093348/how-is-literacy-measured.html' [Online Resource] (archived on May 18, 2026).

BibTeX citation

@article{owid-how-is-literacy-measured,
    author = {Esteban Ortiz-Ospina and Diana Beltekian},
    title = {How is literacy measured?},
    journal = {Our World in Data},
    year = {2018},
    note = {https://archive.ourworldindata.org/20260518-093348/how-is-literacy-measured.html}
}

Reuse this work freely

All visualizations, data, and articles produced by Our World in Data are completely open access under the Creative Commons BY license. You have the permission to use, distribute, and reproduce these in any medium, provided the source and authors are credited.

The data produced by third parties and made available by Our World in Data is subject to the license terms from the original third-party authors. We will always indicate the original source of the data in our documentation, so you should always check the license of any such third-party data before use and redistribution.

All of our charts can be embedded in any site.